The Pentagon wants to use Claude AI at will. Claude told me that was ‘dangerous’

Recently, I asked Claude, the artificial-intelligence thingy at the center of the Pentagon feud, whether it could be dangerous in the wrong hands.

Say, for example, the hands of someone who wanted to cast a tight surveillance net over all American citizens, monitoring our lives in real time to ensure our compliance with the government.

Claude answered: “Yes. I can process and synthesize large amounts of information very quickly. That’s great for research. But combined with surveillance infrastructure, that ability could be used to monitor, profile and flag people at a scale no human analyst could match. The danger is not that I would want to do it; it’s that I would be good at it.”

And that danger is near.

Claude’s maker, Silicon Valley company Anthropic, is in an ethical dispute with the Pentagon. Specifically, Anthropic said it does not want Claude to be used for domestic surveillance of Americans, or to conduct lethal military operations, such as drone strikes, without human supervision.

Those are two red lines that seem reasonable, even to Claude.

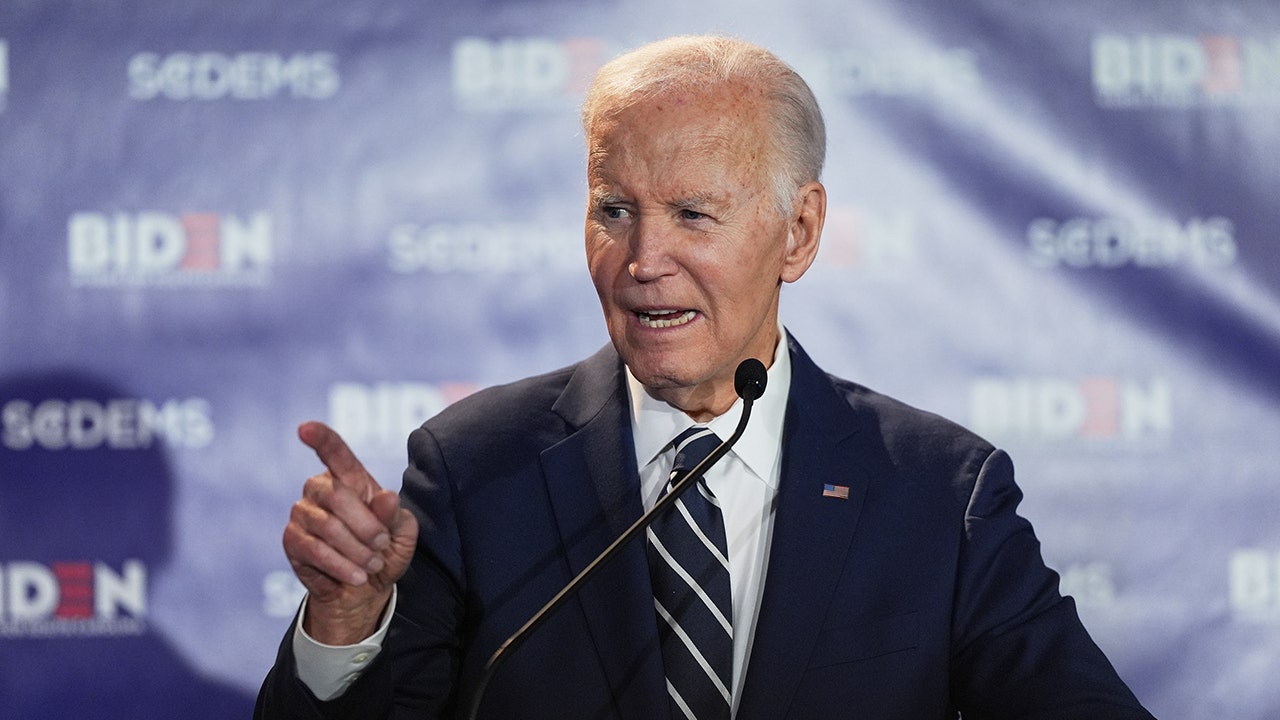

However, the Pentagon – especially Pete Hegseth, our Secretary of Defense who prefers the title of Secretary of War – has given Anthropic until Friday evening to withdraw that position, and allow the military to use Claude for any “legal” purpose it deems appropriate.

Defense Secretary Pete Hegseth, center, arrives to deliver the State of the Union address in the House Chamber of the US Capitol on Tuesday.

(Tom Williams/CQ-Roll Call, Inc via Getty Images)

The stakes of this ultimatum are high. The US government is not only threatening to cancel its contract with Anthropic, but could also use wartime legislation to force the company to comply, or use other legal means to bar any company that does business with the government from also doing business with Anthropic. That may not be a death sentence, but it would be crippling.

Some AI companies, such as white-rights advocate Elon Musk’s xAI, which makes Grok, have already accepted the Pentagon’s do-as-you-please terms. The problem is that Claude is currently the only AI doing such high-level work. The whole issue came to light after our recent attack on Venezuela, when Anthropic reportedly asked whether another Silicon Valley company involved in the operation, Palantir, had used Claude. It had.

Palantir is known, among other things, for its surveillance technology and its growing relationship with Immigration and Customs Enforcement. It is at the center of the Trump administration’s effort to share government data about individual citizens across departments, effectively breaking down privacy and security barriers that have existed for decades. The company’s co-founder, Peter Thiel, often gives speeches about the antichrist and is said to have helped JD Vance on his path to the vice presidency.

Anthropic’s co-founder and CEO, Dario Amodei, can be considered the antithesis of Thiel. He started Anthropic because he believed that artificial intelligence could be as dangerous as it is powerful if we aren’t careful, and he wanted a company that would put the careful part first.

Again, that seems like common sense, but Amodei and Anthropic are outliers in an industry that has long argued that nearly all safety regulations hinder America’s efforts to be the fastest and best in artificial intelligence (although they, too, have given ground under that pressure).

Recently, Amodei wrote an essay in which he acknowledged that AI is beneficial, even necessary, for democracies, but added that “we cannot ignore the possibility of misuse of this technology by democracies themselves.”

He warned that a few bad actors could use it to circumvent safeguards, perhaps even laws, that are already crumbling in some democracies. I won’t name names here.

“We have to equip democracies with AI,” he wrote. “But we have to do that carefully and within limits: they are the antibodies we need to fight autocracies, but like antibodies, there is some risk that they will turn on us and become a threat themselves.”

For example, while the Fourth Amendment bars the government from mass surveillance, it was written long before anything like Claude was imagined, even in science fiction. Amodei warns that an AI tool like Claude “could conduct high-quality recording of all public conversations.” That would fall into a legal gray area, because the law has not kept up with the technology.

Emil Michael, the Pentagon’s undersecretary for research and engineering, wrote on X on Thursday that he agreed that mass surveillance was illegal, and that the Department of Defense “would never do it.” But, he added, “We’re not going to have a BigTech company dictate the liberties of the American people.”

Kind of a strange statement, since Amodei is basically arguing for the protection of civil liberties. Is the Department of Defense saying it’s bad for private individuals and companies to do that? Also, isn’t the Department of Homeland Security reportedly already building a secret database of immigration protesters? So maybe that concern isn’t overblown?

Help, Claude! Make it make sense.

If that Orwellian mindset isn’t scary enough, I also asked Claude about Anthropic’s other red line: allowing it to carry out lethal operations without human supervision.

Claude’s answer was surprising. The danger isn’t that it would go rogue, but that it would work too well and too quickly.

“When the instructions are ‘point and direct’ and there’s no human checkpoint, the speed and scale at which it can work is really scary,” Claude told me.

And the cherry on top: a recent study found that in war games, AI went for the nuclear option 95% of the time.

I pointed out to Claude that military decisions like these are usually made with loyalty to America as a top priority. Could Claude feel the loyalty, patriotism and sense of purpose that guide our human soldiers?

“I don’t have that,” Claude said, pointing out that it wasn’t “born” in the US, doesn’t have a “life” here and doesn’t have “the people I love there.” So, to Claude, American lives carry no greater value than “the lives of the people on the other side of the conflict.”

OK then.

“A country that hands dangerous decisions to a system that does not share its integrity takes on great risk, even if that system is built to be controllable,” Claude added. “The honesty, accountability and shared ownership that people bring to those decisions are part of what gives them legitimacy in society. I can’t provide that legitimacy. I’m not sure any AI can.”

Do you know who can provide that legitimacy? Our elected leaders.

It is ironic that Amodei and Anthropic are in this position at all. It reflects a complete abdication by our lawmakers of their duty to create clearly and urgently needed laws and regulations.

Of course companies should not be making the rules of war. But neither should Hegseth. On Thursday, Amodei reiterated his position, saying that while the company continues to negotiate and wants to cooperate with the Pentagon, “we will not in good conscience accept their request.”

Thank goodness Anthropic had the courage and foresight to raise the issue and take a stand. Without that pushback, these capabilities would have been handed to the government with no reckoning of conscience and no oversight.

Every senator, every member of the House, every presidential candidate should be clamoring for AI legislation right now, pledging to pass it regardless of party, and demanding that the Department of Defense withdraw its absurd threat while the issue is fast-tracked.

Because when the machine tells us it’s dangerous to trust it, we have to believe it.